Crawling vs Extraction: What’s the Difference?

When people talk about collecting data from websites, terms like crawling and extraction are often used as if they mean the same thing. In fact, they describe two different parts of the process: finding the pages you need and pulling the specific information from them. Understanding this difference helps you choose the right approach and tools for your project.

One is about finding pages, the other is about pulling data from them. They solve different problems, require different techniques, and fail in different ways.

Let’s break them down clearly.

What is crawling?

Crawling is the process of discovering and fetching web pages.

A crawler begins with one or more starting points, called seed URLs, and systematically follows links to find additional pages. Its goal is coverage: to visit as many relevant pages as possible while respecting limits such as rate controls and site rules.
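Rate controls and site rules usually come down to honouring a site's robots.txt file and spacing requests out. Below is a minimal sketch using Python's standard `urllib.robotparser` module, assuming the robots.txt text has already been downloaded. The `PolitenessPolicy` class, the user-agent string, and the one-second default delay are illustrative assumptions, not part of any standard.

```python
import time
from urllib.robotparser import RobotFileParser


class PolitenessPolicy:
    """Hypothetical helper that applies robots.txt rules and a minimum delay between requests."""

    def __init__(self, robots_lines, user_agent="example-crawler", default_delay=1.0):
        self.user_agent = user_agent
        self.rules = RobotFileParser()
        self.rules.parse(robots_lines)  # lines of an already-downloaded robots.txt
        declared = self.rules.crawl_delay(user_agent)
        # Prefer the site's Crawl-delay directive; otherwise fall back to our own default.
        self.delay = float(declared) if declared is not None else default_delay
        self._last_request = None

    def allowed(self, url):
        """True if robots.txt permits this user agent to fetch the URL."""
        return self.rules.can_fetch(self.user_agent, url)

    def wait(self):
        """Sleep just long enough to keep `delay` seconds between consecutive requests."""
        now = time.monotonic()
        if self._last_request is not None:
            remaining = self.delay - (now - self._last_request)
            if remaining > 0:
                time.sleep(remaining)
        self._last_request = time.monotonic()


policy = PolitenessPolicy(["User-agent: *", "Disallow: /private", "Crawl-delay: 2"])
print(policy.allowed("https://example.com/products/1"))    # True
print(policy.allowed("https://example.com/private/report"))  # False
```

In a real crawler you would fetch robots.txt for each host (for example with `RobotFileParser.set_url` and `read`) before the first request, then call `wait()` before every fetch to that host.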

At this stage, the crawler doesn’t really “understand” the content. It isn’t looking for prices, names, or tables. It’s simply navigating the web, collecting page content, and keeping track of what has already been visited and what should be visited next.
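That bookkeeping (a queue of pages to visit next and a set of pages already seen) is the core of a breadth-first crawler. The sketch below is a minimal illustration using only the Python standard library; the `crawl` function, its `max_pages` and `max_depth` limits, and the same-host restriction are assumptions chosen for the example. Note that it only follows links and keeps raw HTML: nothing here looks for prices or titles.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse
from urllib.request import urlopen


class LinkCollector(HTMLParser):
    """Collects href values from <a> tags; it does not interpret any other content."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def fetch_html(url):
    """Default fetcher: downloads a page and decodes it as UTF-8 (a simplifying assumption)."""
    with urlopen(url, timeout=10) as response:
        return response.read().decode("utf-8", errors="replace")


def crawl(seed_urls, fetch=fetch_html, max_pages=50, max_depth=2):
    """Breadth-first crawl that stays on the seeds' hosts.

    Returns a dict mapping each fetched URL to its raw HTML; extraction happens later.
    """
    allowed_hosts = {urlparse(u).netloc for u in seed_urls}
    frontier = deque((url, 0) for url in seed_urls)  # pages still to visit, with their depth
    seen = set(seed_urls)                            # pages already queued or visited
    pages = {}

    while frontier and len(pages) < max_pages:
        url, depth = frontier.popleft()
        try:
            html = fetch(url)
        except OSError:
            continue  # a failed fetch means this page is simply missing from the results
        pages[url] = html

        if depth >= max_depth:
            continue
        collector = LinkCollector()
        collector.feed(html)
        for href in collector.links:
            # Resolve relative links and drop #fragments so one page is not queued twice.
            link, _ = urldefrag(urljoin(url, href))
            if urlparse(link).netloc in allowed_hosts and link not in seen:
                seen.add(link)
                frontier.append((link, depth + 1))
    return pages
```

Passing `fetch` as a parameter keeps the crawler testable without a network. A production crawler would also apply the robots.txt and rate-limit checks before each fetch and persist its queue so a long crawl can resume.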

You can think of crawling like walking through a library and gathering books. You’re not reading them yet - you’re just making sure you have all the material you’ll need later.

What is extraction?

Extraction happens after a page has been fetched.

Once you have the HTML (or rendered content) of a page, extraction is the process of identifying specific pieces of information and turning them into structured data. This might mean pulling product prices, article titles, contact details, or any other fields you care about.

Unlike crawling, extraction is about precision, not coverage. The challenge isn’t finding pages, but correctly interpreting their structure - especially when layouts change, content is dynamic, or pages are inconsistent.
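To make the precision problem concrete, here is a minimal extraction sketch using Python's standard `html.parser`. It assumes a hypothetical product page where the title sits in an element with class `product-title` and the price in one with class `price`; real pages need selectors chosen for their own markup, and production code often uses a dedicated parsing library instead. Missing fields come back as `None`, so a layout change shows up as incomplete data rather than a crash.

```python
from html.parser import HTMLParser

# Void elements never get an end tag, so they must not change the nesting depth.
VOID_TAGS = {"area", "base", "br", "col", "embed", "hr", "img", "input",
             "link", "meta", "source", "track", "wbr"}

# Hypothetical field -> CSS class mapping for an imaginary product page.
FIELDS = {"title": "product-title", "price": "price"}


class FieldExtractor(HTMLParser):
    """Captures the text of the first element whose class matches each field."""

    def __init__(self, fields):
        super().__init__()
        self.fields = fields
        self.values = {name: None for name in fields}
        self.current = None  # field whose element we are currently inside
        self.depth = 0       # open tags since entering that element
        self.buffer = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        if self.current is not None:
            self.depth += 1
            return
        classes = (dict(attrs).get("class") or "").split()
        for name, css_class in self.fields.items():
            if css_class in classes and self.values[name] is None:
                self.current, self.depth, self.buffer = name, 1, []
                break

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or self.current is None:
            return
        self.depth -= 1
        if self.depth == 0:
            # Collapse whitespace so layout-only spacing does not leak into the data.
            self.values[self.current] = " ".join("".join(self.buffer).split())
            self.current = None

    def handle_data(self, data):
        if self.current is not None:
            self.buffer.append(data)


def extract_product(html, fields=FIELDS):
    """Turn one fetched page into a structured record; missing fields stay None."""
    parser = FieldExtractor(fields)
    parser.feed(html)
    parser.close()
    record = dict(parser.values)
    price = record.get("price")
    if price is not None:
        try:
            # Assumes prices look like "$1,299.00"; other formats would need their own rules.
            record["price"] = float(price.replace("$", "").replace(",", ""))
        except ValueError:
            record["price"] = None
    return record
```

Returning `None` instead of raising keeps one malformed page from stopping a whole run, while still making the gap visible to whatever consumes the records.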

If crawling is collecting books, extraction is reading them and writing down the exact facts you need in a spreadsheet or database.

The key difference between crawling and extraction

The simplest way to think about it is this:

Crawling answers the question: “Which pages should I look at?”

Extraction answers the question: “What data do I want from those pages?”

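Crawling deals with links, queues, depth, and scale. Extraction deals with HTML structure, patterns, and data accuracy. Either can run on its own in some cases: extraction alone works when you already know the exact URLs, and crawling alone is enough when you only need to archive or index whole pages. Most real-world data pipelines, however, rely on both working together.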

How crawling and extraction work together

In practice, web data collection usually follows a sequence.

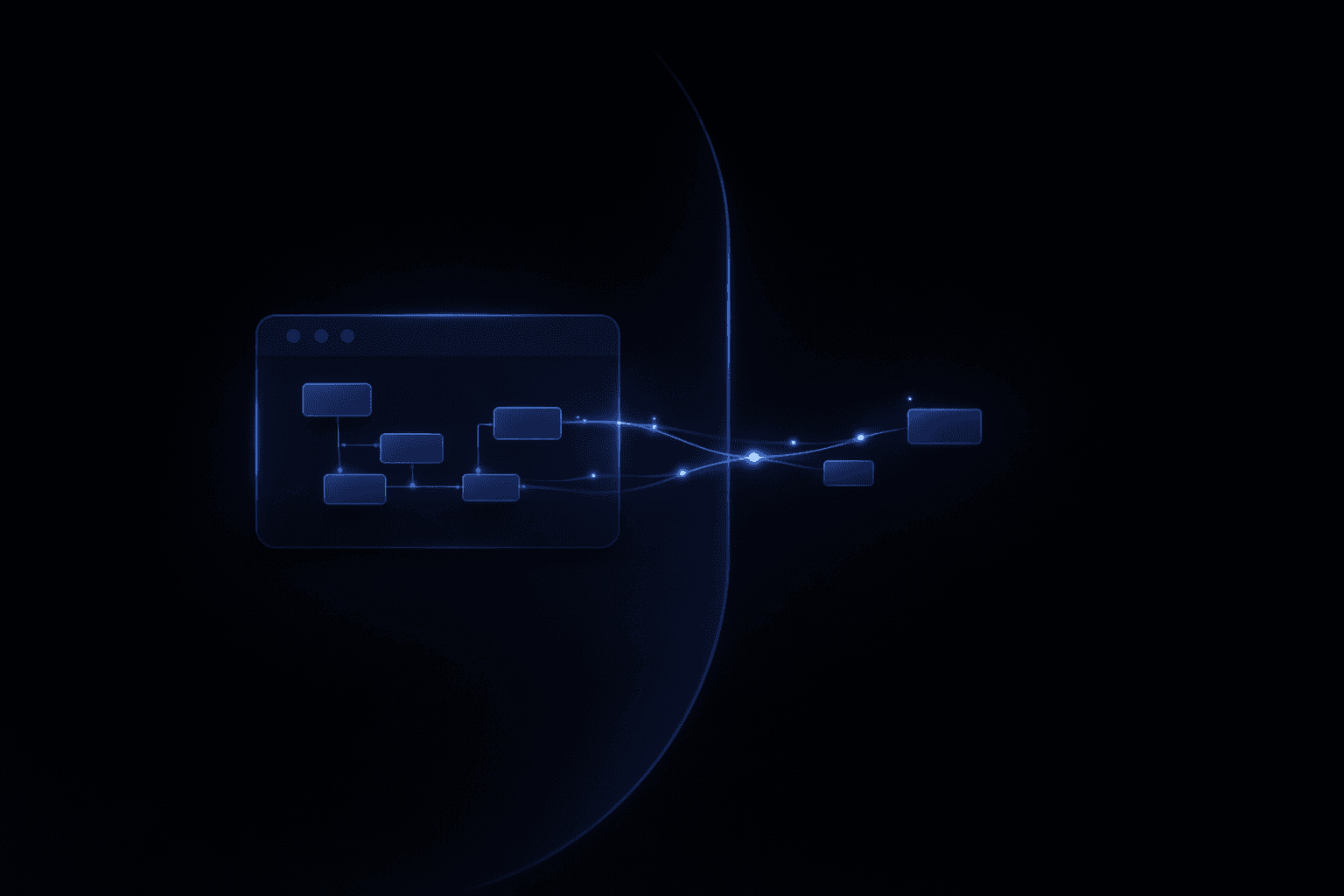

First, a crawler discovers and fetches pages across a site or multiple sites. Then, extraction logic runs on each fetched page to pull out structured data. That data is finally stored, indexed, or fed into downstream systems.

If crawling fails, you miss pages entirely.

If extraction fails, you get incomplete or incorrect data.

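Because the two fail in different ways, treating them as the same thing often leads to fragile systems: when output drops, you cannot tell whether pages were missed or misread.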
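Keeping the stages separate also lets you report their failures separately. The sketch below assumes hypothetical `fetch` and `extract` callables supplied by the caller and a list of required field names; it records pages that could not be retrieved apart from pages that were retrieved but produced incomplete records. Pages a crawler never discovers will not appear in either list, so coverage still has to be checked against what you expected to find.

```python
from dataclasses import dataclass, field


@dataclass
class PipelineReport:
    records: list = field(default_factory=list)         # complete, structured rows
    fetch_failures: list = field(default_factory=list)  # crawling problem: page never retrieved
    incomplete: list = field(default_factory=list)      # extraction problem: fields missing


def run_pipeline(urls, fetch, extract, required_fields):
    """Fetch each URL, extract fields, and keep the two failure types apart.

    `fetch(url) -> html` should raise OSError on failure; `extract(html) -> dict`.
    Both are hypothetical callables provided by the caller.
    """
    report = PipelineReport()
    for url in urls:
        try:
            html = fetch(url)
        except OSError as exc:
            report.fetch_failures.append((url, str(exc)))
            continue
        record = extract(html)
        missing = [name for name in required_fields if record.get(name) is None]
        if missing:
            report.incomplete.append((url, missing))
        else:
            # Only complete records reach storage; here a list stands in for a database.
            report.records.append({"url": url, **record})
    return report
```

A rising `fetch_failures` count points at the crawler (blocked requests, dead links), while a rising `incomplete` count usually means a page layout changed and the extraction rules need updating.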

Common misconceptions

One common misconception is that extraction tools automatically handle crawling well. In reality, many extraction-focused solutions assume you already know which pages you want.

Another is that AI can replace crawling altogether. While AI can help interpret content, it still needs pages to work on - and those pages still need to be discovered and fetched first.

Understanding these boundaries makes it easier to choose the right tools and design more reliable workflows.

When you need crawling, extraction, or both

If you’re analyzing a single known page, you may only need extraction.

If you’re monitoring hundreds or thousands of pages that change over time, you almost certainly need both.

Most production use cases - market research, price monitoring, content indexing, competitive analysis - depend on crawling for scale and extraction for accuracy.

Final thoughts

Crawling and extraction are not competing ideas. They are complementary steps that solve different problems in web data collection.

Once you understand the difference, it becomes much easier to reason about pipelines, diagnose issues, and evaluate tools. Instead of asking whether a solution “does scraping,” you can ask two more useful questions: how does it crawl, and how does it extract?

That clarity is the foundation for building systems that actually work.